I hacked LTX2 to work as a multilingual TTS voice cloner

Took me a bit, but I figured it out. The idea is to generate a very low resolution (64×64) video with input audio, then mask the audio latent space after a set time using “LTXV Set Audio Video Mask By Time”. That way the audio identity is established in the first 10 seconds, and the prompt continues the speech from there.
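
To make the masking idea concrete, here is a minimal NumPy sketch of a time-based mask over audio latent frames. It is not the code of the “LTXV Set Audio Video Mask By Time” node. The function name `time_mask`, the latent frame rate of 25 per second, and the convention that 0 means "keep the reference audio" and 1 means "let the model generate" are all assumptions for illustration.

```python
import numpy as np

def time_mask(total_seconds, keep_seconds, latent_fps):
    """Build a 1-D mask over latent frames (hypothetical helper).

    Assumed convention: 0.0 = keep the reference audio latent,
    1.0 = let the model generate this frame from the prompt.
    """
    n_frames = int(round(total_seconds * latent_fps))
    cut = int(round(keep_seconds * latent_fps))
    mask = np.ones(n_frames, dtype=np.float32)
    mask[:cut] = 0.0  # the first `keep_seconds` stay locked to the input voice
    return mask

# Example numbers only: a 20 s clip where the first 10 s carry the reference
# voice, at an assumed (not confirmed) latent rate of 25 frames per second.
mask = time_mask(total_seconds=20, keep_seconds=10, latent_fps=25)
print(mask.shape, int(mask.sum()))
```

Everything before the cut point is pinned to the reference voice, and everything after it is free for the prompt to drive.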

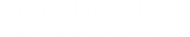

The initial voice is preserved this way, and at the end you just cut off the first 10 seconds. It works with a 20-second audio sample of the voice and gives you about 10 clean seconds. Trying to go beyond that runs into problems, but the good thing is you can get much better emotions by prompting something like “he screams in perfect romanian language” or whatever emotion you want to add. No other open source model I know of covers so many languages, and for my needs, Romanian, it works like a charm. Even better than ElevenLabs, I would say. Who would have known the best open source TTS model is a video model? Workflow is here.
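
If you want to do the final cut outside the workflow, a minimal sketch of dropping the reference segment from the generated waveform could look like this. The helper name `drop_reference`, the 48 kHz sample rate, and the `(channels, samples)` array layout are assumptions, not something taken from the workflow.

```python
import numpy as np

def drop_reference(waveform, sample_rate, ref_seconds=10.0):
    """Remove the leading reference segment from a waveform (hypothetical helper).

    `waveform` is assumed to have samples on its last axis,
    e.g. shape (channels, samples).
    """
    start = int(round(ref_seconds * sample_rate))
    return waveform[..., start:]

# Stand-in for a 20 s stereo output at an assumed 48 kHz sample rate.
sample_rate = 48_000
generated = np.zeros((2, 20 * sample_rate), dtype=np.float32)

cloned = drop_reference(generated, sample_rate, ref_seconds=10.0)
print(cloned.shape[-1] / sample_rate)  # seconds of audio left after the cut
```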